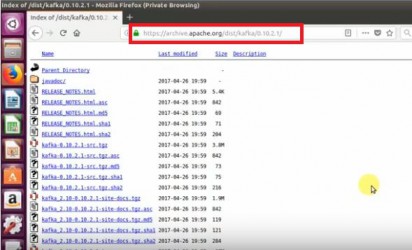

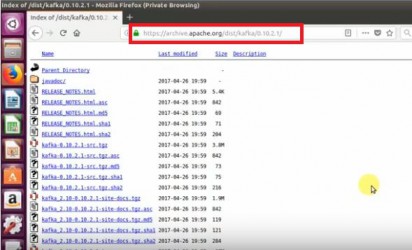

The Kafka installation has been successfully completed. The consumer script continues to run at this point, waiting for more messages. To start Kafka at boot, run `systemctl enable kafka`. To create a topic, run `bin/kafka-topics.sh --create --zookeeper localhost:2181 --replication-factor 1 --partitions 1 --topic Topic-name` (for example, with `exampleTopic` as the topic name); to list the existing topics, run `bin/kafka-topics.sh --list --zookeeper localhost:2181`. Kafka is used worldwide, in many environments and in clusters where this kind of data transmission is needed. Text typed into the producer is immediately visible on the consumer terminal. So, clone the application repository with git clone. Because this setup runs a single broker, keep this value at 1. Read on and start streaming data with Apache Kafka today! Kafka comes with a command-line client that takes input from a file or from standard input and sends it out as messages to the Kafka cluster. The downloaded archive is extracted with the tar command. At this point, you have downloaded and installed the Kafka binaries to your ~/Downloads directory. The unit file has an `[Install]` section.
With this, you can get started with this great tool. Now download the Kafka package with the wget command: `wget https://www.apache.org/dist/kafka/2.7.0/kafka_2.13-2.7.0.tgz`. Be sure to replace kafka/3.1.0/kafka_2.13-3.1.0.tgz with the latest version of the Kafka binaries. You will use this binary file to install Kafka. Kafka producers write data to topics. Kafka is used for high-performance data pipelines, streaming analytics, data integration, and mission-critical applications. The journalctl command lists all of the logs for the kafka service. Open the Kafka configuration file (/etc/kafka/server.properties) in your preferred text editor. This demo, however, uses this file as is, without modifications. You can exit this command or keep this terminal running for further testing. Again, save the changes and close the editor. Unit file lines: `Documentation=http://zookeeper.apache.org`, `Type=simple`, `Restart=on-abnormal`. There are a few steps to set up Apache Kafka on Ubuntu. 11 Steps to Setup Apache Kafka on Ubuntu 20.04 LTS.
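The download and extraction steps above can be sketched as follows. This is a minimal sketch, not the tutorial's exact procedure: the version numbers come from the wget URL quoted above, the `/usr/local/kafka` target comes from the unit file paths used elsewhere on this page, and the `install_kafka` helper name is hypothetical. Older releases are usually moved to the Apache archive, so the URL may need adjusting.

```shell
# Version numbers taken from the wget URL quoted in this tutorial.
SCALA_VERSION="2.13"
KAFKA_VERSION="2.7.0"
KAFKA_DIR="kafka_${SCALA_VERSION}-${KAFKA_VERSION}"
KAFKA_URL="https://www.apache.org/dist/kafka/${KAFKA_VERSION}/${KAFKA_DIR}.tgz"

# Hypothetical helper: download the archive, unpack it, and move the
# result to /usr/local/kafka. Nothing runs until the function is called.
install_kafka() {
  wget "$KAFKA_URL" &&
    tar -xzf "${KAFKA_DIR}.tgz" &&
    sudo mv "$KAFKA_DIR" /usr/local/kafka
}

# Show which archive would be fetched.
echo "Would download: $KAFKA_URL"
```

Calling `install_kafka` performs the actual download. To use a newer release, change `KAFKA_VERSION` (and `SCALA_VERSION` if needed) before calling it.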

See the below screenshot of the Kafka producer and consumer at work. This tutorial helped you to install and configure the Apache Kafka service on an Ubuntu system. This configuration property gives you permission to delete or modify topics, so ensure you know what you are doing before deleting topics. Besides Java, I have installed unzip because we will use it later. Because Oracle Java is now commercially licensed, we use its open-source version, OpenJDK. Download the Apache Kafka binary files from its official download website. The Apache Kafka platform is a distributed data transmission system with horizontal scalability and fault tolerance. This command consumes all of the messages in the kafka topic (--topic ATA) and then prints out the message value. Now, why not build on this newfound knowledge by installing Kafka with Flume to better distribute and manage your messages? Because this setup runs a single instance, keep this value at 1. Unit file line: `ExecStop=/usr/local/kafka/bin/kafka-server-stop.sh`.
Installing Apache Kafka on Linux can be a bit tricky, but no worries, this tutorial has got you covered. Before installing, we have to do some preliminary steps to prepare the system. This demo uses the default values that are in the kafka.service file, but you can customize the file as needed. First, you need to start the ZooKeeper service and then start Kafka. Remember to stop and start your Kafka server as a service. Unit file lines: `[Service]`, `ExecStart=/usr/local/kafka/bin/zookeeper-server-start.sh /usr/local/kafka/config/zookeeper.properties`.

You can't use the Kafka server just yet since, by default, Kafka does not allow you to delete or modify any topics, a category necessary to organize log messages. Now that you are in the ata-kafka directory, you can see that you have two files inside: kafka.service and zookeeper.service. Step 5: Create new systemd unit files for the ZooKeeper and Kafka services.

Next, run the apt update command to update your system's package index. Use the systemctl command to start a single-node ZooKeeper instance. Customize each section in the zookeeper.service file, as needed. Run the su command so that only authorized users, such as root, can run commands as the kafka user. Finally, create a Kafka consumer using the kafka-console-consumer.sh script. Now, rerun the command to remove the kafka user from the sudoers list. This part of the tutorial will help you to work with the Kafka server. Thanks to powerful documentation and a very active community, Apache Kafka has a very good reputation worldwide. Let's run the producer and then type a few messages into the console to send to the server. Congratulations! You now have a fully functional Kafka server that will run as systemd services. Note that this file refers to the zookeeper.service file, which you might modify at some point.
Now, run the journalctl command to verify that the service has started up successfully. Now, move into the apache-kafka directory and list the files inside. Make sure to set the correct JAVA_HOME path as per the Java installed on your system. To configure your Kafka server, you will have to edit the Kafka configuration file (/etc/kafka/server.properties). Run the exit command to switch back to your normal user account. You can name the directory as you prefer, but the directory is called Downloads for this demo. Refer to the image below to make sure you get the process right. Apache Kafka is a free and open-source software platform developed by the Apache Software Foundation. Apache Kafka is written in Java, so Java must be installed before Kafka can be used. You can create multiple topics by running the same command as above. To start a producer, run `bin/kafka-console-producer.sh --broker-list localhost:9092 --topic Topic-name`; to start a consumer, run `bin/kafka-console-consumer.sh --bootstrap-server localhost:9092 --topic Topic-name --from-beginning`. Next, run `sudo deluser kafka sudo` and press Enter to confirm that you want to remove the kafka user from sudoers.
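The topic, producer, and consumer commands quoted on this page can be collected into small shell helpers. This is a sketch under stated assumptions: the function names are hypothetical, `KAFKA_HOME` defaults to the `/usr/local/kafka` path used in the unit files, and a broker is assumed to be listening on `localhost:9092`. It also swaps the `--zookeeper localhost:2181` and `--broker-list` options shown in the original commands for `--bootstrap-server`, because `kafka-topics.sh` no longer accepts `--zookeeper` from Kafka 3.0 onward; on the 2.7.0 release either form should work.

```shell
# Assumptions: Kafka lives under /usr/local/kafka and a broker listens on
# localhost:9092. Override either variable to match your own setup.
KAFKA_HOME="${KAFKA_HOME:-/usr/local/kafka}"
BOOTSTRAP="${BOOTSTRAP:-localhost:9092}"

# Create a single-partition, single-replica topic (fits a one-broker setup).
create_topic() {
  "$KAFKA_HOME/bin/kafka-topics.sh" --create \
    --bootstrap-server "$BOOTSTRAP" \
    --replication-factor 1 --partitions 1 \
    --topic "$1"
}

# List all topics known to the broker.
list_topics() {
  "$KAFKA_HOME/bin/kafka-topics.sh" --list --bootstrap-server "$BOOTSTRAP"
}

# Read lines from standard input and send each one as a message.
produce() {
  "$KAFKA_HOME/bin/kafka-console-producer.sh" \
    --bootstrap-server "$BOOTSTRAP" --topic "$1"
}

# Print every message in the topic from the beginning, then keep waiting.
consume() {
  "$KAFKA_HOME/bin/kafka-console-consumer.sh" \
    --bootstrap-server "$BOOTSTRAP" --topic "$1" --from-beginning
}
```

A typical session would run `create_topic exampleTopic`, then `produce exampleTopic` in one terminal and `consume exampleTopic` in another; press Ctrl+C to stop either script.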
Run the curl command to download the Kafka binaries from the Apache Foundation website and output (-o) them to a binary file (kafka.tgz) in your ~/Downloads directory. Step 6: Reload the systemd daemon and start the ZooKeeper service with `systemctl start zookeeper`. Kafka topics are feeds of messages to and from the server, which helps eliminate the complications of having messy and unorganized data in the Kafka servers. Any data stored in those partitions is no longer accessible once they are gone. To edit the ZooKeeper unit file, run `vim /etc/systemd/system/zookeeper.service`; the file begins with a `[Unit]` section. The kafka user is a system-level user and should not be exposed to users who connect to Kafka. In this post, you learned how to install Apache Kafka on Ubuntu 20.04 step by step. If you don't have git installed, install it first. You've also touched on consuming messages from a Kafka topic produced by the Kafka producer, resulting in effective event log management. Set the partitions option to the number of brokers you want your data to be split between. Additionally, you learned to create a new topic in the Kafka server and run a sample producer and consumer process with Apache Kafka. Install OpenJDK on your system from the official PPAs. Now start the Kafka server and view the running status. All done. You must have sudo privileged account access to the Ubuntu 20.04 Linux system. Kafka is written in the Scala and Java programming languages. Unit file lines: `Documentation=http://kafka.apache.org/documentation.html`, `Requires=zookeeper.service`.
Kafka provides multiple pre-built shell scripts to work with it. This directory will store the Kafka binaries. Run the git command to clone the ata-kafka project to your local machine so that you can modify it for use as a unit file for your Kafka service. Run the command to start the kafka service. The [Service] section defines how, when, and where to start the Kafka service. Next, create a new Kafka topic named ATA to verify that your Kafka server is running correctly. You will also learn to create topics in Kafka and run producer and consumer nodes. Before streaming data, you'll first have to install Apache Kafka on your machine. A dedicated sudo user for Kafka: this tutorial uses a sudo user called kafka. You can also select any nearby mirror to download from. In this tutorial, you'll learn to install and configure Apache Kafka, so you can start processing your data like a pro, making your business more efficient and productive. Create a Kafka producer using the kafka-console-producer.sh script. Deleting a topic deletes the partitions for that topic as well. To apply the changes to the new services, run `systemctl daemon-reload`. Then enable and start both services. Save the changes and close the editor. Unit file lines: `Requires=network.target remote-fs.target`, `WantedBy=multi-user.target`, `[Unit]`.
You can install Kafka on any platform that supports Java. The producer is the process responsible for putting data into Kafka. Run the following command to create the topic. Step 10: Send messages to the Kafka cluster. Now open a new terminal for the Kafka consumer process in the next step. You can open another terminal to add more messages to your topic and press Ctrl+C to stop the consumer script once you are done testing. Need a streaming platform to handle large amounts of data? By now, you may still have the Kafka producer (Step #6) running in another terminal. As of this writing, the current Kafka version is 3.1.0. This tutorial will be a hands-on demonstration. Best of all, it is open source, so we can examine its source code and deploy it on our own servers. Now, you need to create systemd unit files for the ZooKeeper and Kafka services. A Linux machine: this demo uses Debian 10, but any Linux distribution will work. Doing so transforms data as needed before writing it out to another system like HDFS, HBase, or Elasticsearch. This tutorial described, step by step, how to install Apache Kafka on an Ubuntu 20.04 LTS Linux system. Be sure there are no spaces at the beginning of each line, or else the file will not be recognized, and your Kafka server will not work. Run the mkdir command to create the /home/kafka/Downloads directory. Unit file line: `Description=Apache Zookeeper server`.
Now open a web browser and access http://your-server:9000 and you will see the following. As you can see, the services are working correctly and so far everything has gone well. Apache Kafka can run on any platform that supports Java. Open the zookeeper.service file in your preferred text editor. Tutorials by Nicholas Xuan Nguyen. Just type some text on that producer terminal. In order to set up Kafka on an Ubuntu system, you need to install Java first. You've undoubtedly heard about Apache Kafka on Linux. We have chosen /usr/local/ as the folder, but it can be any folder you want. Unit file line: `Description=Apache Kafka Server`.
First, create a topic named testTopic with a single partition and a single replica. The replication-factor describes how many copies of the data will be created. First, create a systemd unit file for ZooKeeper; next, create a systemd unit file for the Kafka service and add the unit file content to each. You'll use this file as a reference to create the kafka.service file. If you've configured everything correctly, you'll see a message that says Started kafka.service.
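The unit file lines scattered through this page can be assembled into two files roughly as follows. This is a reconstruction, not the original author's exact files: which line belongs to which file is inferred from the paths and descriptions, and the Kafka `ExecStart` line is an assumption, since only `ExecStop` survives for Kafka in the text above. The sketch writes both files into the current directory so they can be reviewed before anything under `/etc` is touched.

```shell
# Write both unit files into the current directory for review.
# No line inside the unit files starts with a space, as the tutorial requires.

cat > zookeeper.service <<'EOF'
[Unit]
Description=Apache Zookeeper server
Documentation=http://zookeeper.apache.org
Requires=network.target remote-fs.target

[Service]
Type=simple
ExecStart=/usr/local/kafka/bin/zookeeper-server-start.sh /usr/local/kafka/config/zookeeper.properties
ExecStop=/usr/local/kafka/bin/zookeeper-server-stop.sh
Restart=on-abnormal

[Install]
WantedBy=multi-user.target
EOF

cat > kafka.service <<'EOF'
[Unit]
Description=Apache Kafka Server
Documentation=http://kafka.apache.org/documentation.html
Requires=zookeeper.service

[Service]
Type=simple
Environment="JAVA_HOME=/usr/lib/jvm/java-1.11.0-openjdk-amd64"
# Assumption: ExecStart is not preserved in the source text for Kafka.
ExecStart=/usr/local/kafka/bin/kafka-server-start.sh /usr/local/kafka/config/server.properties
ExecStop=/usr/local/kafka/bin/kafka-server-stop.sh

[Install]
WantedBy=multi-user.target
EOF
```

Once the files look right, copy them to `/etc/systemd/system/` as root, run `systemctl daemon-reload`, then `systemctl start zookeeper` followed by `systemctl start kafka`, and `systemctl enable kafka` to start Kafka at boot. Adjust `JAVA_HOME` to match the Java installed on your system.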

tutorials by Nicholas Xuan Nguyen! Just type some text on that producer terminal. To set up Kafka on an Ubuntu system, you need to install Java first. You've undoubtedly heard about Apache Kafka on Linux.

Description=Apache Kafka Server

We have chosen /usr/local/ as the folder, but it can be any folder you want. First, create a topic named testTopic with a single partition and a single replica. The replication-factor describes how many copies of the data will be created. First, create a systemd unit file for Zookeeper. Next, create a systemd unit file for the Kafka service and add the content below. You'll use this file as a reference to create the kafka.service file. If you've configured everything correctly, you'll see a message that says Started kafka.service, as shown below. Now we need to create the service files for Zookeeper and Kafka so we can start them, stop them, and see their running status. Kafka is written in Scala and Java. It provides a unified, high-throughput, low-latency platform for handling real-time data feeds.

Environment="JAVA_HOME=/usr/lib/jvm/java-1.11.0-openjdk-amd64"

But to check that Java has been installed successfully, run.
Apache Kafka is perfect for real-time data processing, and it's becoming more and more popular. Doing so further improves the security of your Kafka installation.

[Install]
ExecStop=/usr/local/kafka/bin/zookeeper-server-stop.sh

Throughout this tutorial, you've learned to set up and configure Apache Kafka on your machine. Since you've created the kafka.service and zookeeper.service files, you can also run either of the commands below to stop or restart your systemd-based Kafka server.

Type=simple

You'll see the message in the output below because your messages are printed by the Kafka console consumer from the ATA Kafka topic. Published: 24 February 2022 - 8 min. Reload the systemd daemon to apply new changes. This behavior can lead to data loss if you have topics that are being written or updated as the process shuts down. I am Angelo. Then you need to modify the application configuration file.
systemctl start kafka

Kafka also has a command-line consumer to read data from the Kafka cluster and display messages to standard output.
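As a rough sketch of the command-line consumer mentioned above, the helper below wraps kafka-console-consumer.sh. The install path /usr/local/kafka, the broker address localhost:9092, and the function name consume_topic are assumptions for illustration only; ATA is the topic name used elsewhere in this tutorial. Setting KAFKA_DRY_RUN=1 prints the command instead of running it, which is useful when no broker is available.

```shell
# Assumed defaults; override them in the environment to match your setup.
KAFKA_HOME="${KAFKA_HOME:-/usr/local/kafka}"
BOOTSTRAP="${BOOTSTRAP:-localhost:9092}"

# Hypothetical helper: read every message in a topic from the beginning.
# $1 is the topic name.
consume_topic() {
  if [ "${KAFKA_DRY_RUN:-0}" = "1" ]; then
    # Dry run: only show the command that would be executed.
    echo "$KAFKA_HOME/bin/kafka-console-consumer.sh --bootstrap-server $BOOTSTRAP --topic $1 --from-beginning"
  else
    "$KAFKA_HOME/bin/kafka-console-consumer.sh" \
      --bootstrap-server "$BOOTSTRAP" --topic "$1" --from-beginning
  fi
}
```

For example, consume_topic ATA keeps running and prints new messages until you press Ctrl+C.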

After=network.target remote-fs.target

By default, Kafka sends each line as a separate message. To mitigate the risk, you'll remove the kafka user from the sudoers file and disable the password for the kafka user. If you don't, the process will remain in memory, and you can only stop the process by killing it. Step 4: Move the extracted folder to /usr/local/. Now, run the tar command below to extract (-x) the Kafka binaries (~/Downloads/kafka.tgz) into the automatically created kafka directory. Open the kafka.service file in your preferred text editor, and configure how your Kafka server behaves when running as a systemd service.
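The extraction step described above can be sketched as a small shell function. The function name extract_kafka and the destination ~/kafka are assumptions, not part of the original tutorial. The flags are standard tar options: -x extracts, -z decompresses gzip, -f names the archive, -C switches to the target directory, and --strip-components=1 drops the versioned top-level folder so the files land directly in the destination.

```shell
# Hypothetical helper: unpack a Kafka release archive into a directory.
# $1: path to the downloaded archive, $2: destination directory.
extract_kafka() {
  mkdir -p "$2" &&
  tar -xzf "$1" -C "$2" --strip-components=1
}
```

A typical call would be extract_kafka ~/Downloads/kafka.tgz ~/kafka, after which the bin and config folders sit directly under ~/kafka.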



See the screenshot below of the Kafka producer and consumer at work. This tutorial helped you install and configure the Apache Kafka service on an Ubuntu system. This configuration property gives you permission to delete or modify topics, so make sure you know what you are doing before deleting topics. To do this, run this command. Besides Java, I have installed unzip because we will use it later. Because Oracle Java is now commercially licensed, we are using its open-source counterpart, OpenJDK. Download the Apache Kafka binary files from its official download website. The Apache Kafka platform is a distributed data transmission system with horizontal scalability and fault tolerance.

ExecStop=/usr/local/kafka/bin/kafka-server-stop.sh

This command consumes all of the messages in the kafka topic (--topic ATA) and then prints out the message value. Now, why not build on this newfound knowledge by installing Kafka with Flume to better distribute and manage your messages? As we are running with a single instance, keep this value at 1.
ExecStart=/usr/local/kafka/bin/zookeeper-server-start.sh /usr/local/kafka/config/zookeeper.properties

Installing Apache Kafka on Linux can be a bit tricky, but no worries, this tutorial has got you covered. Before installing, we have to do some preliminary steps to prepare the system.

[Service]

This demo uses the default values that are in the kafka.service file, but you can customize the file as needed. First, you need to start the ZooKeeper service and then start Kafka. Remember to stop and start your Kafka server as a service.
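The Zookeeper unit directives scattered through this tutorial can be collected into one file. The sketch below writes them to a path of your choice. Every directive is taken from the tutorial text, but their grouping into sections and the helper name write_zookeeper_unit are assumptions, so compare the result with your own zookeeper.service before using it.

```shell
# Hypothetical helper: write a zookeeper.service unit file.
# $1: file to write, for example a scratch path that you later copy
# into /etc/systemd/system with sudo.
write_zookeeper_unit() {
  cat > "$1" <<'EOF'
[Unit]
Description=Apache Zookeeper server
Documentation=http://zookeeper.apache.org
After=network.target remote-fs.target

[Service]
Type=simple
ExecStart=/usr/local/kafka/bin/zookeeper-server-start.sh /usr/local/kafka/config/zookeeper.properties
ExecStop=/usr/local/kafka/bin/zookeeper-server-stop.sh
Restart=on-abnormal

[Install]
WantedBy=multi-user.target
EOF
}
```

Note that no line in the generated file starts with a space, which matches the earlier warning about leading whitespace. After copying the file into place, reload the systemd daemon so the new unit is picked up.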


You can't use the Kafka server just yet since, by default, Kafka does not allow you to delete or modify any topics, the categories used to organize log messages. Now that you are in the ata-kafka directory, you can see that you have two files inside: kafka.service and zookeeper.service, as shown below. Step 5: Create new systemd unit files for the Zookeeper and Kafka services.
